If you have ever been stuck in bumper-to-bumper traffic after a long day at work, you have probably daydreamed about kicking back, closing your eyes, and letting your car do the driving. For years, the auto industry has promised us that this exact scenario is just around the corner. Today, we are closer to that reality than ever before, but the transition from human drivers to self-driving cars is proving to be much more complicated than simply flipping a switch.

When we look at the streets right now, we see a fascinating mix of traditional driving and futuristic tech. You might spot a robotaxi smoothly navigating a busy intersection in a major city, while your own car might struggle to simply keep itself centered in a lane during a rainstorm. The gap between experimental testing and everyday consumer use is still significant.

As someone who closely follows the automotive tech space, I want to cut through the hype. Let’s look at where autonomous vehicles actually stand today, how the underlying tech works in real life, and what hurdles we still need to clear before we can truly let go of the steering wheel.

The Slow Evolution Toward Fully Autonomous Vehicles

To understand where we are, it helps to look at how we got here. The push toward hands-free driving did not happen overnight. It has been a slow, steady rollout of features that many of us already use daily.

Think about traditional cruise control from a few decades ago. It just held your speed. Then came adaptive cruise control, which slows your car down if the vehicle in front of you hits the brakes. Next, we got lane-keeping assist, which gently nudges the steering wheel if you drift over the painted lines.
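To make the difference between plain and adaptive cruise control concrete, here is a minimal Python sketch of a "constant time-gap" follower. It assumes speeds in m/s and the gap to the car ahead in metres. The function name `acc_acceleration`, the 1.8-second time gap, and every gain and limit are hypothetical illustration values, not any automaker's tuning; production systems use far more elaborate controllers.

```python
def acc_acceleration(own_speed, set_speed, gap=None, lead_speed=None,
                     time_gap=1.8, standstill=5.0,
                     k_gap=0.2, k_rel=0.6, k_cruise=0.4,
                     a_min=-3.0, a_max=1.5):
    """Commanded acceleration (m/s^2) for a toy adaptive cruise controller.

    Speeds are in m/s and the gap to the lead car is in metres.
    All default gains and limits are hypothetical illustration values.
    """
    # Plain cruise control: push speed toward the driver's set speed.
    a_cruise = k_cruise * (set_speed - own_speed)

    if gap is None or lead_speed is None:
        # No car detected ahead, so behave like ordinary cruise control.
        a = a_cruise
    else:
        # Keep a gap that grows with speed (a constant time-gap policy).
        desired_gap = standstill + time_gap * own_speed
        a_follow = k_gap * (gap - desired_gap) + k_rel * (lead_speed - own_speed)
        # Obey whichever demand is more cautious.
        a = min(a_cruise, a_follow)

    # Clamp to comfortable acceleration and braking limits.
    return max(a_min, min(a_max, a))


# Open road, driver set 30 m/s: accelerate, capped at a_max.
print(acc_acceleration(own_speed=20.0, set_speed=30.0))  # prints 1.5
# A slower car appears 20 m ahead: brake, capped at a_min.
print(acc_acceleration(own_speed=30.0, set_speed=30.0, gap=20.0, lead_speed=20.0))  # prints -3.0
```

Taking the minimum of the two demands is what turns ordinary cruise control into adaptive cruise: the car never accelerates past what the following rule allows, even if the driver's set speed is higher.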

All of these were stepping stones toward advanced autonomous technology. Today, several automakers offer systems that allow for “hands-off, eyes-on” driving on certain mapped highways. This means the car handles the speed, braking, and steering, but you must keep your eyes firmly on the road in case something goes wrong.

The next major leap, which we are currently witnessing in limited rollouts, is “eyes-off” driving. At this level of autonomy, the driver can actually look away to read a book or check their phone, but only under very specific conditions. In real-world use, these systems are limited to certain speeds and certain types of roads. We are not yet at the point where you can sleep in the back seat while your personal car drives you across the country.

How Autonomous Technology Actually Sees the Road

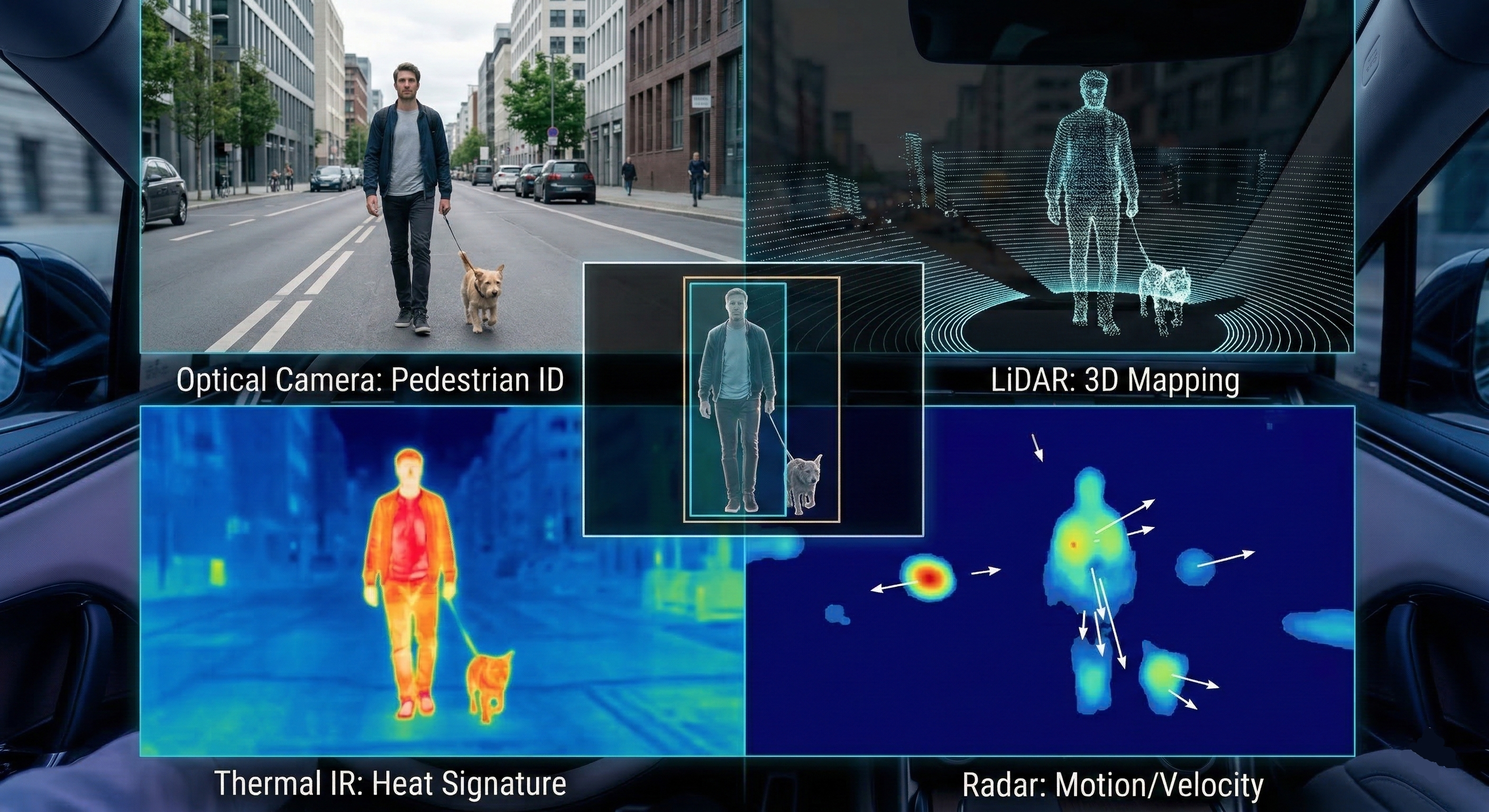

If you have ever wondered how a heavy machine knows exactly when to stop for a red light or dodge a stray dog, the answer lies in a complex combination of hardware and software. Autonomous technology relies on an overlapping web of sensors, simply because no single sensor is perfect.

Here is how the main tools work together:

- Cameras: Just like human eyes, cameras read speed limit signs, spot traffic lights, and identify pedestrians. But just like human eyes, they struggle when the sun is glaring directly into the lens or when heavy fog rolls in.

- Radar: This bounces radio waves off surrounding objects to determine how far away they are and how fast they are moving. Radar is excellent in bad weather, but it doesn’t provide a detailed picture. It knows something is there, but it might not know if it is a stalled car or an empty cardboard box.

- LiDAR: Think of this as a highly detailed, 3D laser scanner. It sits on top of many autonomous vehicles, spinning rapidly to create a real-time, highly accurate 3D map of the environment. LiDAR is incredibly precise, but the hardware has historically been very expensive and bulky.
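The two things the radar bullet mentions, distance and speed, come from simple physics: range from how long an echo takes to return, and closing speed from the Doppler shift of that echo. This idealized Python sketch assumes a single echo and a 77 GHz carrier (a band commonly used for automotive radar); the function names are hypothetical, and real automotive radars typically use frequency-modulated continuous-wave (FMCW) signal processing rather than timing one pulse.

```python
SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def radar_range(round_trip_s):
    """Distance (m) to a target from an echo's round-trip time (s)."""
    # The signal travels out and back, so the target is half the path away.
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def radial_speed(doppler_shift_hz, carrier_hz=77e9):
    """Closing speed (m/s) from the Doppler shift of the echo.

    Two-way Doppler: f_d = 2 * v * f0 / c, so v = f_d * c / (2 * f0).
    A positive result means the target is getting closer.
    The 77 GHz default carrier is an assumption for illustration.
    """
    return doppler_shift_hz * SPEED_OF_LIGHT / (2.0 * carrier_hz)


# An echo returning after 0.4 microseconds comes from roughly 60 m away.
print(radar_range(0.4e-6))
# A shift of about 5.1 kHz at 77 GHz means roughly 10 m/s of closing speed.
print(radial_speed(5137.0))
```

Note what is missing: nothing here says what the object is. That is exactly why radar alone cannot tell a stalled car from a cardboard box.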

In practice, these sensors must constantly feed data to the car’s central computer. There, artificial intelligence acts as the brain, combining all of that data in fractions of a second (a process known as sensor fusion) to make driving decisions. When everything works perfectly, the car drives smoothly. But the real world is rarely perfect.
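One simple, textbook way to fuse overlapping measurements is an inverse-variance weighted average: each sensor's estimate counts in proportion to how precise it is. The Python sketch below illustrates that idea under stated assumptions; it is not how any particular vehicle works (real stacks generally use Kalman filters or learned models over full object tracks), and the `fuse` helper and the noise figures are hypothetical.

```python
import math


def fuse(estimates):
    """Inverse-variance weighted average of (value, std_dev) pairs.

    Returns the fused value and its standard deviation. More precise
    sensors (smaller std_dev) receive proportionally more weight.
    """
    weights = [1.0 / (sd * sd) for _, sd in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, math.sqrt(1.0 / total)


# Hypothetical distance readings (metres, std_dev) to the same car ahead.
camera = (42.0, 3.0)   # noisy monocular estimate
radar = (40.5, 1.0)
lidar = (40.1, 0.2)
print(fuse([camera, radar, lidar]))  # dominated by the precise LiDAR reading
print(fuse([radar, lidar]))          # camera dropped, e.g. blinded by sun glare
```

Because the sensors overlap, losing one (a camera staring into the sun, say) degrades the estimate gracefully instead of leaving the car blind.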

The Safety Question: Can We Trust the Tech?

Whenever the topic of self-driving cars comes up, the first question people ask is: Are they safe?

It is a valid concern. When a human makes a mistake and causes a fender bender, we chalk it up to human error. When a computer makes a mistake, it makes national news.

However, general safety trends and early data from robotaxi fleets suggest that autonomous vehicles have a strong safety record in the controlled environments where they operate. Computers do not get tired, they do not text while driving, and they do not drive aggressively. They can also react to a sudden stop faster than a human driver, who needs time to notice the hazard and move a foot to the brake pedal. Many riders notice that an autonomous taxi drives very cautiously, sometimes almost too cautiously, stopping for things a human driver would smoothly navigate around.
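Why faster reactions matter can be shown with the standard stopping-distance estimate: distance covered while reacting plus braking distance, d = v·t + v²/(2a). In the Python sketch below, the 1.5 s human reaction time, the 0.5 s computer reaction time, and the 7 m/s² braking deceleration are assumed round numbers for illustration, not measured figures.

```python
def stopping_distance(speed_mps, reaction_s, decel_mps2=7.0):
    """Reaction distance plus braking distance, in metres.

    speed_mps:  initial speed in m/s
    reaction_s: time before the brakes are applied
    decel_mps2: constant braking deceleration (7 m/s^2 is an assumed dry-road value)
    """
    reaction_distance = speed_mps * reaction_s               # still at full speed
    braking_distance = speed_mps ** 2 / (2.0 * decel_mps2)   # v^2 / (2a)
    return reaction_distance + braking_distance


speed = 30.0  # m/s, roughly 67 mph
human = stopping_distance(speed, reaction_s=1.5)     # assumed human reaction time
computer = stopping_distance(speed, reaction_s=0.5)  # assumed system reaction time
print(f"human: {human:.1f} m, computer: {computer:.1f} m")  # human: 109.3 m, computer: 79.3 m
```

With the same brakes, the braking term is identical, so the whole difference comes from the reaction term: at 30 m/s, every second of reaction time saved is 30 metres of road.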

But the real test of safety is how these vehicles handle “edge cases.” An edge case is an unpredictable, rare scenario. Imagine driving down a neighborhood street and a child’s ball rolls out from between two parked cars, followed immediately by the child. A human driver relies on intuition to instantly understand the context and slam on the brakes. Autonomous technology has to rely on its training data. If the AI has never encountered a specific bizarre scenario—like a flock of birds carrying a piece of reflective debris—it might hesitate or make the wrong choice.

The Tricky “Handover” Problem

One of the most dangerous phases of the transition to fully autonomous driving is something engineers call the “handover.” This happens in current advanced systems when the car is driving itself, encounters a situation it doesn’t understand, and abruptly alerts the human driver to take control.

Think about this logically: If you have been cruising down the highway for 45 minutes with your hands off the wheel and your mind wandering, your situational awareness is practically zero. If the car suddenly beeps frantically and demands that you take the wheel because construction cones have confused its sensors, it takes your brain several seconds to process what is happening. At highway speeds, those seconds cover a lot of ground: at 65 mph, a car travels about 95 feet every second, so a three-second delay means nearly 300 feet of road before you have fully taken control.
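The handover can be pictured as a small state machine. The Python sketch below is a toy model: the `Mode` names, the `HandoverMonitor` class, and the 10-second timeout are hypothetical, and the fallback (bringing the car to a controlled stop if the driver never responds) is one commonly discussed design choice, simplified here.

```python
from enum import Enum, auto


class Mode(Enum):
    AUTOMATED = auto()
    TAKEOVER_REQUESTED = auto()
    MANUAL = auto()
    MINIMAL_RISK_MANEUVER = auto()


class HandoverMonitor:
    """Toy takeover-request logic; the timeout is a hypothetical value."""

    def __init__(self, takeover_timeout_s=10.0):
        self.mode = Mode.AUTOMATED
        self.timeout = takeover_timeout_s
        self.requested_at = None

    def step(self, now_s, system_confident, driver_ready):
        if self.mode is Mode.AUTOMATED and not system_confident:
            # The car hit something it cannot handle: alert the driver.
            self.mode = Mode.TAKEOVER_REQUESTED
            self.requested_at = now_s
        elif self.mode is Mode.TAKEOVER_REQUESTED:
            if driver_ready:
                # Hands on the wheel and eyes on the road: driver is in charge.
                self.mode = Mode.MANUAL
            elif now_s - self.requested_at >= self.timeout:
                # No response in time: slow down and stop as safely as possible.
                self.mode = Mode.MINIMAL_RISK_MANEUVER
        return self.mode


monitor = HandoverMonitor()
monitor.step(0.0, system_confident=True, driver_ready=False)   # cruising normally
monitor.step(1.0, system_confident=False, driver_ready=False)  # cones confuse the sensors
print(monitor.step(12.0, system_confident=False, driver_ready=False).name)  # MINIMAL_RISK_MANEUVER
```

The dangerous window is the time spent in `TAKEOVER_REQUESTED`: the system has already given up, but the human has not yet caught up.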

Many safety advocates argue that partial autonomy is actually more dangerous than full manual driving or full computer driving, simply because humans are naturally terrible at acting as passive supervisors. If the car is doing 99% of the work, we tend to tune out, making us unprepared for the 1% of the time we are actually needed.

Navigating the Muddy Waters of Liability

Beyond the technology itself, self-driving cars are creating a massive headache for the legal and insurance industries.

If you are manually driving your car and rear-end someone, it is your fault. Your insurance pays for it. But what happens if you are using an approved, hands-free autonomous system and the car rear-ends someone? Are you at fault because you are sitting in the driver’s seat? Or is the automaker at fault because their software failed to apply the brakes?

Currently, laws and insurance policies are struggling to keep up with the pace of innovation. In most places, the human in the driver’s seat is still considered the ultimate responsible party. You are legally expected to take over if the system fails. However, as we move toward vehicles that don’t even have steering wheels or pedals, liability will inevitably shift toward the manufacturers and software developers. This legal gray area is one of the main reasons why consumer deployment is happening at a relatively cautious pace.

What to Expect in the Coming Years

So, when will you be able to buy a fully autonomous car that drops you off at the office and parks itself? Honestly, that reality is still a ways off for the average consumer.

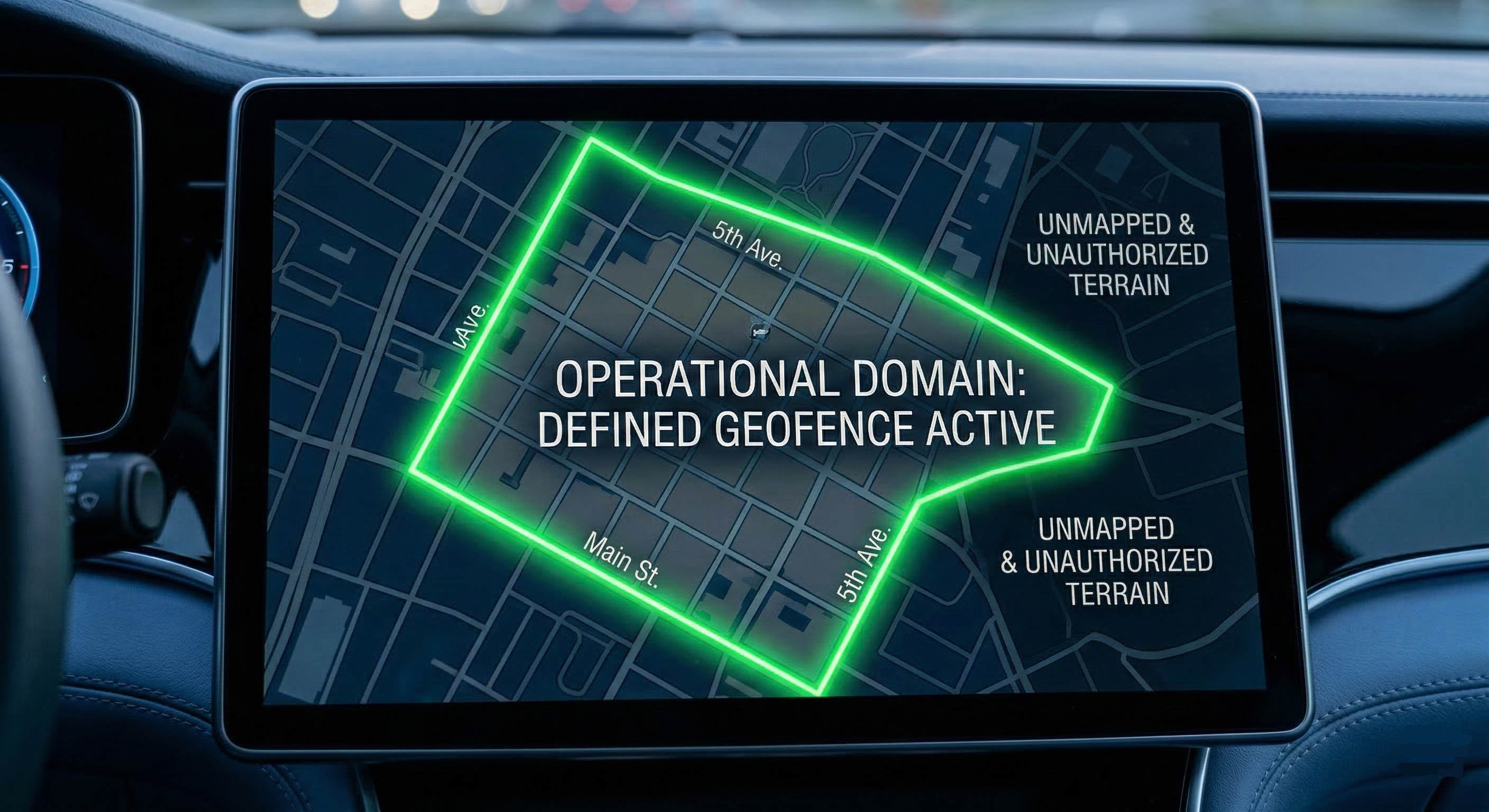

In the near future, the growth of self-driving cars will likely remain focused on commercial fleets and robotaxis operating in “geofenced” areas. A geofence is a specific geographic boundary, like a downtown district in a major city, that has been mapped in extreme detail. The cars operate very well within that zone because the AI knows every curb, stoplight, and crosswalk. But take that same car out to an unmapped dirt road in the countryside, and it simply won’t know how to function.
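Checking whether a location falls inside a geofence is a classic point-in-polygon problem. The Python sketch below uses the ray-casting (even-odd) test on a flat x/y coordinate frame in metres. The function names, the rectangular zone, and the rule that both pickup and drop-off must lie inside are hypothetical assumptions for illustration; real geofences are far more detailed.

```python
def inside_geofence(point, polygon):
    """Ray-casting test: True if point lies inside the polygon.

    point and polygon vertices are (x, y) pairs in a flat local frame
    (an assumption; real fleets work with detailed map coordinates).
    """
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            # Each crossing to the right of the point flips inside/outside.
            if x < x_cross:
                inside = not inside
    return inside


def can_accept_trip(pickup, dropoff, zone):
    """Hypothetical dispatch rule: both ends of the trip must be in the zone."""
    return inside_geofence(pickup, zone) and inside_geofence(dropoff, zone)


downtown = [(0, 0), (2000, 0), (2000, 1500), (0, 1500)]  # hypothetical zone
print(can_accept_trip((300, 400), (1800, 1200), downtown))  # True
print(can_accept_trip((300, 400), (2500, 900), downtown))   # False: drop-off outside
```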

We are also seeing automakers shift their focus toward making advanced driver assistance systems (ADAS) more reliable before pushing for total autonomy. Features like automatic emergency braking, blind-spot intervention, and advanced highway cruising will likely become standard on almost all new cars, gradually reducing accidents and saving lives in the background.

Final Thoughts

The journey toward fully autonomous technology is no longer just a science project; it is actively happening on our roads today. However, the path forward requires an immense amount of patience. Building a computer that can beat a human at chess is comparatively easy because chess has fixed rules. Driving on an open road alongside distracted humans, unpredictable weather, and crumbling infrastructure is vastly more complex.

While self-driving cars hold the incredible promise of eliminating traffic accidents and giving us our daily commute time back, we still have to bridge the gap between machine logic and human intuition. Until the technology can reliably handle the unpredictable chaos of the real world, human oversight isn’t just a legal requirement; it is a practical necessity.